Hash Tables

A comprehensive guide on various strings operations and techniques used in algorithm design, particularly for solving problems efficiently - hash tables - collisions

Intro.

A nice simple explanation in : https://medium.com/basecs/taking-hash-tables-off-the-shelf-139cbf4752f0 about hash tables that explains :

Hash tables are like a super-organized bookshelf in a library. Imagine you have a lot of books and you need to find one quickly. If you just throw your books randomly on the shelf, finding a specific book would take a long time, as you'd have to look through each one. This is like searching in an array or a linked list - it's slow because you have to check every item, which takes linear time (O(n)).

Now, imagine instead you have a special method to organize your books. You use a formula (hash function) that takes the title of a book and tells you exactly where on the shelf it should go. This is what a hash table does. It uses the hash function to decide where to store each book, so when you need to find a book, you use the same formula to know exactly where to look. This makes finding books super fast, almost instant, which is why we say it has constant time complexity (O(1)) for searching.

However, sometimes two books might end up in the same spot (a collision). There are ways to handle this, like adding another shelf (separate chaining) or finding the next available spot (open addressing). In real life, like phonebooks or libraries, hash tables are great because they let you find things quickly without having to search through everything. They are efficient and save a lot of time, especially when you have a lot of data.

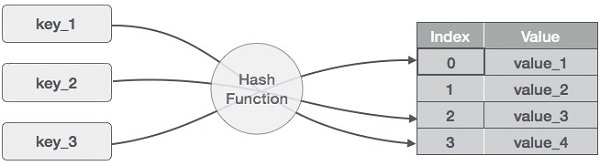

Basic Concept

A hash table stores key-value pairs. It uses a hash function to compute an index into an array of buckets or slots, from which the desired value can be found. Ideally, the hash function will assign each key to a unique bucket, but most hash table designs assume that hash collisions—situations where different keys hash to the same bucket—can occur.

Components

1. **Hash Function**: Converts a key into an array index. A good hash function should distribute keys uniformly across the array, minimizing collisions.

2. **Buckets or Slots**: The array where the actual data is stored.

3. **Collision Resolution**: A strategy to handle two keys that hash to the same index (common methods include chaining and open addressing).

Example

Let's consider a simple example of a hash table that maps names (strings) to ages (integers). Assume we have a hash function that converts a string to an integer by summing the ASCII values of its characters and then taking the modulo with the size of the array.

1. **Hash Function**: `hash(name) = sum(ASCII values of characters in name) % array_size`

2. **Array Size**: 10 (for simplicity)

Operations

- **Inserting ("Alice", 25)**

- Compute hash: `hash("Alice") = (A + l + i + c + e) % 10`

- Suppose it gives index 3. We store the value 25 at index 3.

- **Inserting ("Bob", 30)**

- Compute hash: `hash("Bob") = (B + o + b) % 10`

- Suppose it gives index 6. We store the value 30 at index 6.

- **Looking up for "Alice"**

- Compute hash: `hash("Alice") = (A + l + i + c + e) % 10`

- Look at index 3 and find 25.

- **Handling Collision**

- Suppose we add "John" and `hash("John")` also gives 3. This is a collision.

- If we're using **chaining**, we'll make a linked list at index 3 containing both "Alice" and "John".

- If we're using **open addressing**, we'll find another open slot in the array for "John".

Efficiency

- **Best Case**: O(1) for insertions, deletions, and lookups.

- **Worst Case**: O(n), particularly if there are many collisions or the hash function is poor.

Practical Applications

- Implementing associative arrays.

- Database indexing.

- Caching.

Important Considerations

- A poor hash function can lead to many collisions, drastically reducing the efficiency of the hash table.

- The size of the array should be chosen carefully, usually a prime number not too close to a power of two.

- The load factor (number of entries divided by the number of buckets) should be monitored to resize the hash table when necessary.

Hash tables are a clear example of a trade-off between time and space complexity: they use more memory than an array to enable faster access.

Resources

https://www.coursera.org/lecture/data-structures-optimizing-performance/core-hash-tables-m7UuP